The AI backlash begins: artists could protect against plagiarism with this powerful tool

A team of researchers from the University of Chicago has developed a tool intended to help online artists “fight back against AI companies” by, essentially, inserting poison pills into their original work.

The software, named Nightshade after the family of poisonous plants, introduces poisoned pixels into digital art that disrupt how generative AIs interpret it. Models like Stable Diffusion are trained on enormous numbers of images scraped from the internet, and Nightshade exploits this as a ‘security problem’. As explained by the MIT Technology Review, these “poisoned data samples can manipulate models to” learn the wrong thing. For example, a model might learn that a picture of a dog shows a cat, or that a car is a cow.

Poison tactics

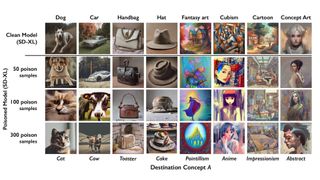

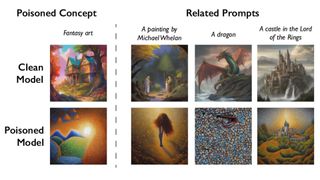

As part of the testing phase, the team fed Stable Diffusion poisoned images and then “challenged it to create images of dogs.” After 50 poisoned samples, the AI generated deformed dogs with six legs. After 100, the results started to look like cats. By 300, the dogs had become full-fledged cats. The images above show the other tests.

The report goes on to say that Nightshade also affects “tangentially related” ideas, because generative AIs are good “at making connections between words.” Poisoning the word “dog” also confuses similar concepts such as “puppy,” “husky,” and “wolf.” The same applies to art styles.

AI companies can, in principle, remove the toxic pixels. But as the MIT post points out, it is “very difficult to remove them,” because developers would have to “find and remove every corrupted example.” To give you an idea of how difficult this would be, a single 1080p image has over two million pixels. As if that weren’t hard enough, these models are “trained on billions of data samples.” So imagine searching through a sea of pixels for the handful that are tampering with the AI engine.
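The “over two million pixels” figure follows directly from the resolution, assuming the standard 16:9 1080p frame of 1,920 pixels across by 1,080 pixels down:

```latex
1920 \times 1080 = 2{,}073{,}600 \text{ pixels per image}
```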

At least, that’s the idea. Nightshade is still in its early stages: the team has submitted the research for peer review at the Usenix computer security conference. MIT Technology Review managed to get a sneak peek.

Future efforts

We contacted the team leader, Professor Ben Y. Zhao of the University of Chicago, with several questions.

He told us that they plan to “deploy and release Nightshade for public use.” It will be part of Glaze as an “optional feature.” Glaze, if you’re not familiar with it, is another tool from Zhao’s team that lets artists “mask their own personal style” so that AI models cannot copy it. He also hopes to make Nightshade open source so others can make their own poison.

We also asked Professor Zhao whether there are any plans to make a Nightshade for video and literature. Several authors are currently suing OpenAI, claiming the company is “using their copyrighted works without permission.” He said that developing poisoning software for other kinds of work would be a major undertaking, “as those domains are very different from static images.” The team has “no plans yet to address these.” Hopefully that changes soon.

So far, the initial reactions to Nightshade have been positive. Junfeng Yang, a professor of computer science at Columbia University, told Technology Review that the tool could push AI developers to “better respect artists’ rights.” They might even be willing to pay royalties.

If you’re interested in taking up illustration as a hobby, check out TechRadar’s list of the best digital art and drawing software in 2023.